How Ambient AI Scribes Are Changing Medical Coding Intensity

On the surface, AI scribes are meant to reduce the time we physicians spend typing in the EHR so we can focus more on patients. More or less, they are doing that.

But a second benefit is starting to get more airtime. Depending on who you ask, it is either the most exciting thing about these tools or the most troubling:

Ambient scribes are great at coding accuracy.

In this article, I look at how AI scribes are changing coding accuracy and why that matters for physicians.

The Deets: Coding Accuracy

When we see patients, we document what we are treating. We do not naturally think in ICD-10 codes in the exam room (although, maybe some of us do). If a patient walks in with a chronic cough, mild hyponatremia, and a recently changed diuretic, we manage all three, but we might only formally code for one.

Clinical Documentation Integrity (CDI) teams exist because of this gap. Their job is to chase down every billable condition after the fact and make sure it gets captured. That work is labor-intensive, inconsistent, and structurally slow. Ambient AI scribes can close the gap in real time.

Ambient scribes listen to the full encounter, capturing every diagnosis mentioned and every condition discussed. Some platforms go further by layering on retrospective coding AI that scans the full chart and surfaces additional billable diagnoses a physician referenced but never formally listed. The ROI numbers from early adopters are significant. One ambient vendor markets roughly $13,000 per clinician annually in recovered revenue.

From a pure documentation standpoint, this is an improvement in coding accuracy, since we were likely undercoding before and AI is correcting that.

Trilliant Health’s Data on Coding After AI Scribe Adoption

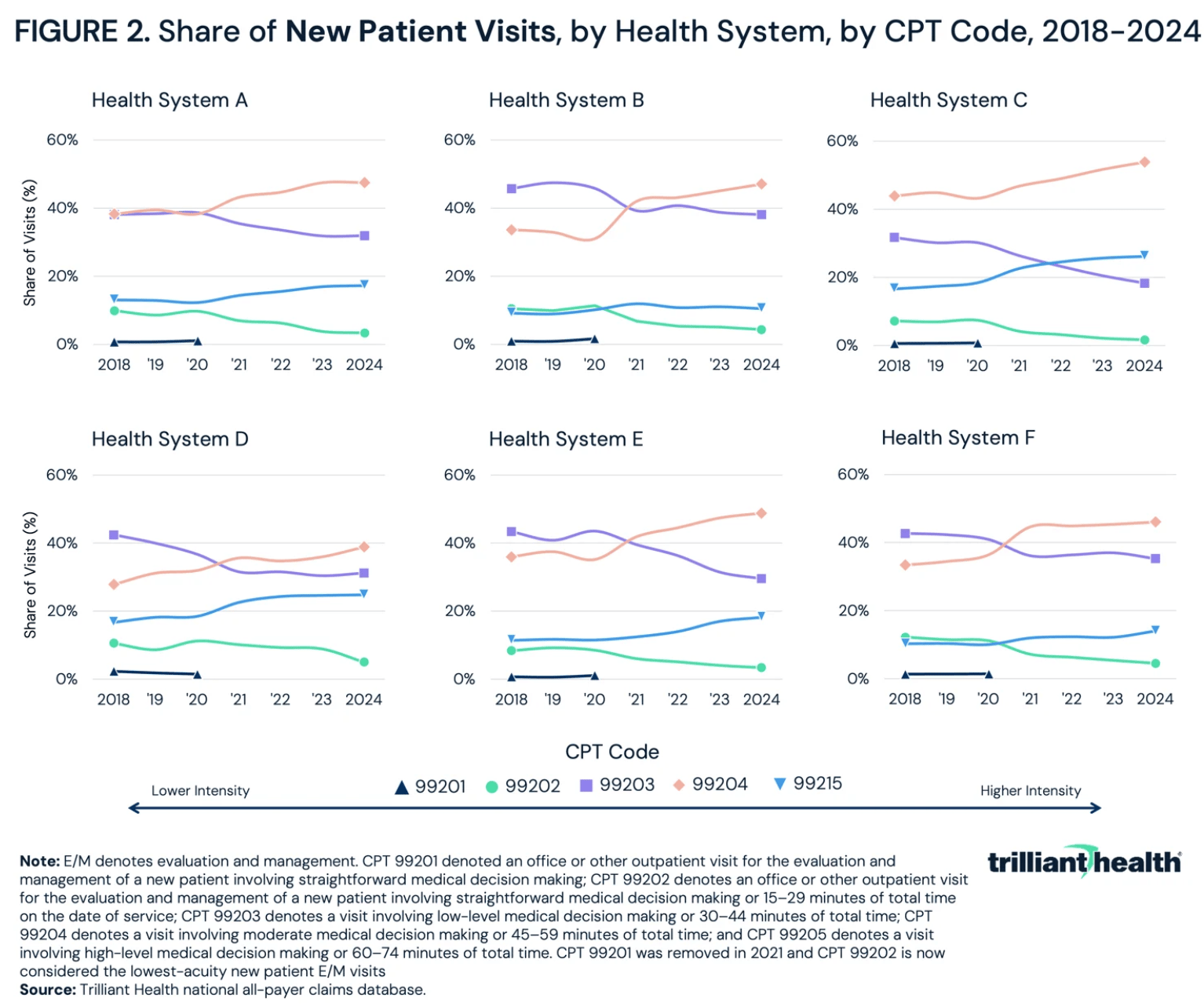

A recent analysis from Trilliant Health examined outpatient E/M billing patterns at six large health systems that have publicly adopted ambient AI scribing, using national all-payer claims data from 2018 to 2024. Across every system, visits shifted toward higher-intensity codes for both new and established patients.

For new patient visits, the share billed at the highest acuity levels (CPT 99204–99205) rose by 12 to 20 percentage points across all six systems (orange line). One health system saw 80% of new patient visits billed at high intensity by 2024. For established patients, the increase ranged from 7 to 12 percentage points. These changes were consistent across geographically and organizationally distinct institutions, all of which adopted ambient AI during the study period.

The increase appeared across nearly every diagnosis category. For factors influencing health status, respiratory disease, and mental and behavioral disorders, coding intensity rose across the board.

Trilliant's interpretation is measured. They argue the increase likely reflects better rules-based documentation rather than fraud. Ambient AI records every word spoken. A tool that captures everything clinically relevant will naturally generate more complete, higher-acuity documentation than a physician typing notes in Epic at 11 PM. And with the 2021 E/M coding revisions that shifted emphasis toward medical decision-making and total time, more encounters now legitimately qualify for higher codes.

Trilliant is also candid about the limits of what its analysis can show:

The analysis has no control group of health systems that did not adopt ambient AI, so it can't isolate the technology's contribution from broader secular trends in coding intensity.

It also can't distinguish between documentation that accurately reflects clinical complexity and documentation that overstates it. That distinction between accurate coding and upcoding is precisely what's in dispute, and the data alone can't answer it.

A single outpatient visit coded one level higher might mean $10–40 more in reimbursement. Across millions of visits, that compounds fast.
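To make that arithmetic concrete, here is a minimal back-of-the-envelope sketch. The $10 to $40 per-visit range comes from the paragraph above. The visit volume (1 million) and the 10-point shift are hypothetical inputs I picked for illustration, not figures from the Trilliant analysis, and the function name is my own.

```python
def added_reimbursement(visits, shift_points, low_per_visit=10, high_per_visit=40):
    """Estimate added dollars when some visits are coded one level higher.

    visits        -- total annual visits (hypothetical input)
    shift_points  -- percentage points of visits that move up one level
    low/high      -- per-visit reimbursement bump, in dollars ($10 to $40)
    Returns a (low_estimate, high_estimate) tuple in whole dollars.
    """
    # Integer math keeps the result exact for whole-number inputs.
    shifted_visits = visits * shift_points // 100
    return shifted_visits * low_per_visit, shifted_visits * high_per_visit


# Hypothetical scenario: 1,000,000 visits, 10 percentage points shift up a level.
low, high = added_reimbursement(visits=1_000_000, shift_points=10)
print(f"${low:,} to ${high:,}")  # $1,000,000 to $4,000,000
```

Under those assumed inputs, a one-level bump on a tenth of visits is worth $1 million to $4 million a year, before accounting for payer mix or contract rates.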

Blue Cross Blue Shield’s Study

In my initial article, "How AI Documentation Tools Are Making Upcoding Worse," I covered what Blue Cross Blue Shield's research arm found when it examined the same question across 62 million commercial members.

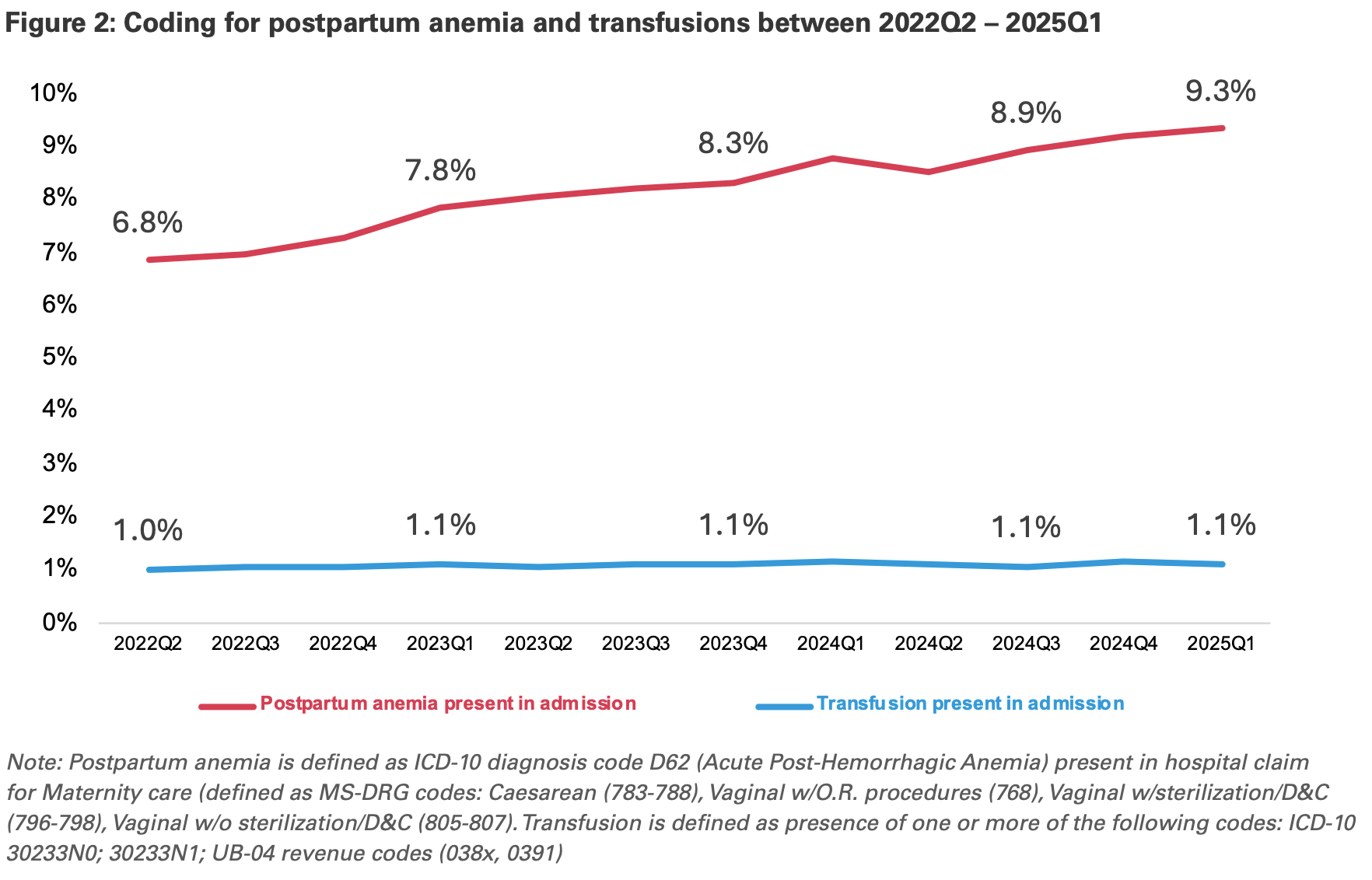

Their analysis flagged postpartum anemia as a case study. Between 2022 and 2025, coding for that diagnosis tripled at the highest-growth hospitals (a surrogate for AI adoption), while rates of transfusion, the standard treatment for clinically significant postpartum anemia, stayed essentially flat. One BCBS plan audited a major outlier hospital system and found that fewer than 20% of cases coded with postpartum anemia actually met established clinical criteria.

BCBS estimated that coding intensity shifts in maternity admissions alone added roughly $22 million in spending over the study period.
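The logic behind the postpartum anemia comparison is simple: if a diagnosis is coded far more often but its standard treatment is not delivered more often, the coding growth deserves a closer look. Here is a rough sketch of that check. The function names, the 1.5x tolerance, and the example rates are all my own hypothetical choices, not BCBS's method or data.

```python
def growth_ratio(before, after):
    """Return how many times larger `after` is than `before`."""
    if before <= 0:
        raise ValueError("baseline rate must be positive")
    return after / before


def coding_outpaces_treatment(dx_before, dx_after, tx_before, tx_after, tolerance=1.5):
    """Flag when diagnosis coding grows much faster than its standard treatment.

    Rates can be in any consistent unit (here: cases per 1,000 deliveries).
    `tolerance` is an arbitrary illustrative threshold, not a clinical standard.
    """
    return growth_ratio(dx_before, dx_after) > tolerance * growth_ratio(tx_before, tx_after)


# Hypothetical rates per 1,000 deliveries: coding triples, transfusions stay flat.
print(coding_outpaces_treatment(dx_before=40, dx_after=120, tx_before=10, tx_after=10))  # True
```

A flag like this is only a screening signal. It says where to audit, not whether any individual chart was coded incorrectly.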

The AI tools are not making clinical decisions, but they are doing exactly what they were designed to do: capture everything (Deming’s Principle!). In a fee-for-service system that rewards documentation volume, "capturing everything" and "maximizing revenue" can end up being the same thing.

Dashevsky's Dissection

We're likely to see a cat-and-mouse game, as Bryan Vartabedian, MD mentioned in a comment on my LinkedIn post. These AI scribes and CDI platforms will capture more diagnostic codes, which will increase revenue cycle management activity. Insurers, recognizing that they will have to reimburse more, will probably use their own AI tools to downcode or deny care through prior authorizations. In effect, it becomes AI versus AI. Here is how this could affect patients, physicians, and health insurers.

Patients may not feel this directly at first. But as coding intensity rises and insurers pay out more, those costs will be passed along through higher premiums and, for patients on high-deductible plans, higher out-of-pocket costs tied to higher-coded visits. If payers respond by tightening prior authorization, patients will feel that too through delays, denials, and care fragmentation. The premium increase is the visible part. The prior auth response is where care gets disrupted.

For physicians, provider groups, and health systems, more accurate coding should mean better reimbursement for the services we actually provide. I want to be clear that this is meaningfully different from what we see in Medicare Advantage, where upcoding has been used deliberately to inflate risk scores and capture government dollars without a corresponding increase in care delivered. With ambient AI, the argument is that we're correcting a longstanding undercoding problem and finally getting paid for conditions we were already treating. Up until now, this has been labor-intensive and inconsistently applied. Coding that happens prospectively, during the encounter, is more accurate and more defensible than a CDI team reviewing charts two weeks later.

But here is where it gets complicated for us as individual physicians. Most of us are not seeing the final coded output. The ambient scribe captures the encounter, the coding AI scans the chart, and a claim goes out, often without the physician reviewing what was actually billed. We signed off on the note, but we didn't necessarily sign off on the code. The False Claims Act does not require proof of specific intent to defraud. Liability can attach to reckless disregard or deliberate ignorance of whether a claim is accurate, not only to deliberate fraud. If an audit finds that your name is attached to claims that don't meet documentation criteria, the liability question becomes very real very fast. This is something physician groups and hospital legal teams need to get ahead of.

Even when coding is clinically accurate, payers may still deny or downcode claims. A payer's definition of "clinically justified" does not automatically align with what an ambient AI tool documented. So physicians and health systems could find themselves in a position where they coded more completely and correctly than ever before and still face aggressive pushback from insurers who are running their own AI against those claims.

When it comes to payers and CMS, if costs rise, they will offset them through higher premiums, tighter prior authorizations, and more aggressive claim audits. The BCBS analysis I covered earlier is already evidence of this in motion, although that report comes from an insurer and is not a neutral analysis.

One thing Trilliant noted in its conclusion is that ambient AI creates a time-stamped record of every encounter, which now makes it technically possible to audit whether a code was justified in a way that wasn't feasible before. So it is a tool for accountability and transparency, but it also means there is a paper trail that payers can weaponize in audits that simply didn't exist when we were typing notes after the visit.

In every prior tech arms race in healthcare, from Medicare Advantage risk adjustment to prior authorization automation, the race itself became a cost center, and none of it improved care. We're watching the same dynamic start to play out here, just faster and at a larger scale.